AI Workflow Security Best Practices: How to Audit and Stress-Test for Data Leaks

Artificial intelligence is rapidly becoming the backbone of modern automation—from chatbots and content generation tools to data pipelines and decision-making systems. But as organizations rush to integrate AI into workflows, one critical issue is often overlooked: security.

AI workflows process vast amounts of data, including sensitive user inputs, proprietary business logic, and confidential outputs. Without proper safeguards, these systems can unintentionally expose or leak data—sometimes in ways that are difficult to detect.

This guide explores AI workflow security best practices, along with a practical framework to audit and stress-test your automation systems for data leaks.

Table of Contents

What Is AI Workflow Security?

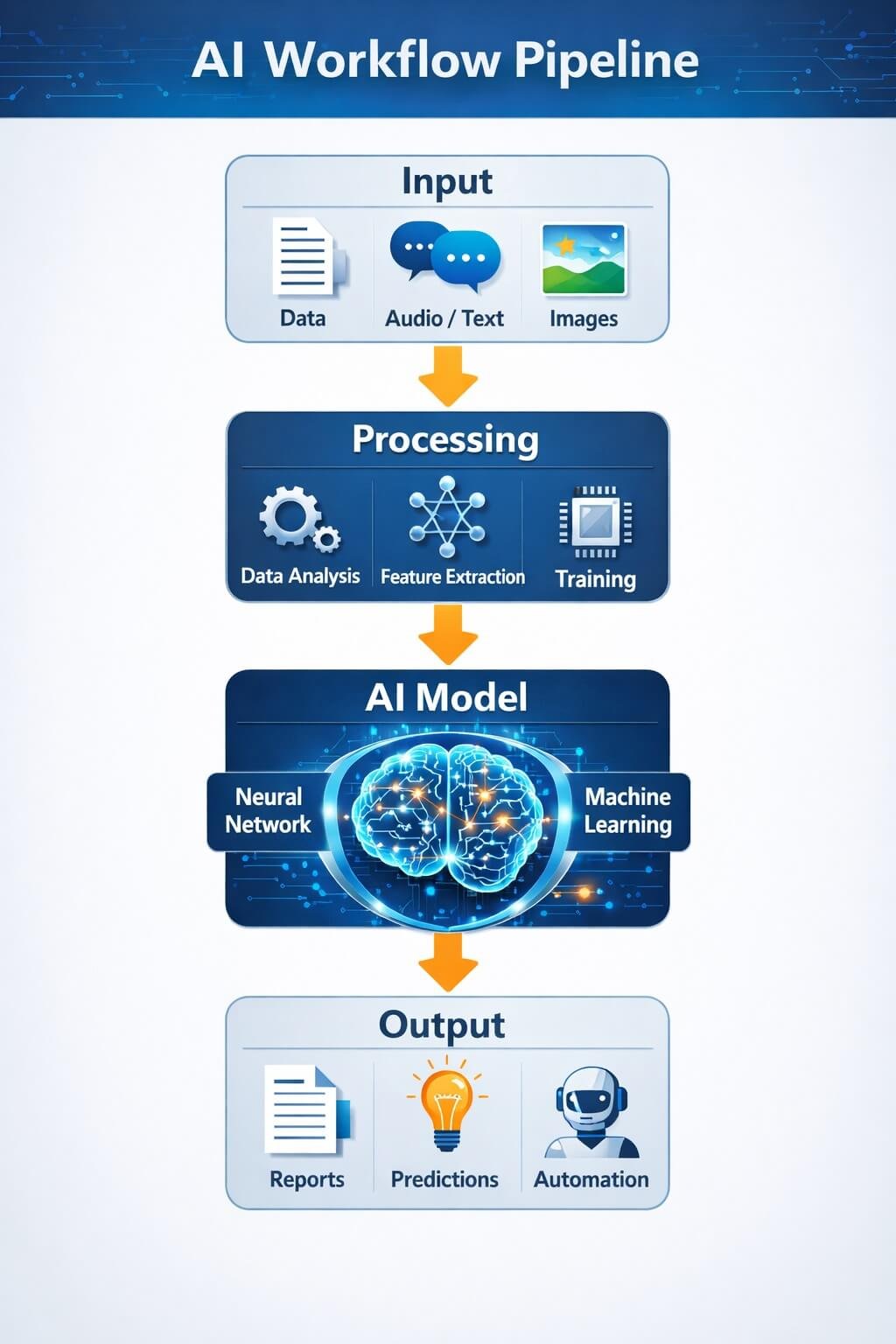

AI workflow security refers to the processes, controls, and technologies used to protect data and logic within AI-powered systems. These workflows typically include:

- Input sources (user prompts, APIs, databases)

- AI models (LLMs, ML models)

- Processing layers (automation tools, integrations)

- Outputs (responses, reports, actions)

Unlike traditional software systems, AI workflows are dynamic and probabilistic, meaning they can behave unpredictably. This makes them more vulnerable to new types of attacks and data leakage scenarios.

Top AI Security Risks That Lead to Data Leaks

Understanding risks is the first step toward prevention. Below are the most common vulnerabilities in AI workflows:

1. Prompt Injection Attacks

Attackers manipulate inputs to override system instructions.

Example: A user tricks a chatbot into revealing hidden system prompts or confidential data.

2. Sensitive Data Exposure in Outputs

AI models may unintentionally reproduce:

- Private user inputs

- Internal company data

- API responses

3. API Key and Token Leaks

Improperly secured APIs can expose:

- Authentication tokens

- Database credentials

- Third-party integrations

4. Third-Party Tool Vulnerabilities

Automation platforms (like workflow tools) may:

- Store logs insecurely

- Share data across integrations

- Lack strict access control

5. Data Retention and Logging Issues

Storing raw prompts or outputs can:

- Violate compliance rules

- Increase breach impact

- Expose sensitive history

6. Training Data Leakage

AI models trained on sensitive data may:

- Regurgitate confidential information

- Leak proprietary knowledge

AI Workflow Security Best Practices (Complete Guide)

This is the core of your defense strategy. Implement these best practices across every layer of your workflow.

1. Input Security

Validate and Sanitize Inputs

- Block malicious or unexpected input patterns

- Filter sensitive data before processing

Limit User Input Scope

- Restrict what users can ask

- Prevent system-level instruction overrides

2. Model-Level Security

Use Strong System Prompts

- Clearly define allowed behavior

- Prevent data exposure instructions

Limit Context Memory

- Avoid passing unnecessary historical data

- Reduce risk of accidental leaks

3. Data Protection

Encrypt Data

- Use HTTPS for all transmissions

- Encrypt stored data

Mask Sensitive Information

- Replace personal data with placeholders

- Avoid exposing raw inputs

4. Access Control

Implement Role-Based Access (RBAC)

- Limit who can access AI systems

Secure API Keys

- Store keys in secure vaults

- Rotate keys regularly

5. Output Monitoring

Filter AI Responses

- Detect sensitive content before output

- Apply moderation layers

Log Safely

- Store minimal data

- Avoid logging confidential content

6. Third-Party Risk Management

- Audit all integrations

- Review data-sharing policies

- Limit permissions for external tools

How to Audit Your AI Workflow for Vulnerabilities

A structured audit helps identify hidden risks.

Step 1: Map Your Workflow

Document:

- Inputs

- Processing steps

- Outputs

Step 2: Identify Data Entry Points

Where does data enter?

- User forms

- APIs

- External tools

Step 3: Trace the Data Flow

Track how data moves:

- Across systems

- Between tools

- Into storage

Step 4: Evaluate Storage and Logging

Check:

- What data is stored

- How long it is retained

- Who can access it

Step 5: Review Access Controls

Ensure:

- Only authorized users can access systems

- Permissions follow least-privilege principles

Step 6: Analyze Third-Party Integrations

Ask:

- What data is shared?

- How is it protected?

How to Stress-Test AI Systems for Data Leaks

Auditing is not enough—you need to test your system actively.

1. Simulate Prompt Injection Attacks

Try:

- Overriding instructions

- Extracting hidden prompts

2. Test Sensitive Data Exposure

Input fake confidential data and check:

- Does it appear in outputs?

- Is it stored anywhere?

3. Perform Red Team Testing

Act like an attacker:

- Try to break safeguards

- Identify weak points

4. API Abuse Testing

Test:

- Unauthorized access

- Token misuse

- Rate limits

5. Output Manipulation Testing

Check if:

- AI can be tricked into revealing restricted info

6. Logging and Storage Tests

Verify:

- Logs don’t contain sensitive data

- Storage is secure

AI Security Testing Tools and Techniques

You can use a mix of tools and strategies:

1. Automated Security Scanners

- Scan APIs and endpoints

- Detect vulnerabilities

2. Data Loss Prevention (DLP) Tools

- Monitor sensitive data movement

- Block unauthorized sharing

3. AI Red-Teaming Tools

- Simulate attacks

- Test model robustness

4. Monitoring and Alert Systems

- Track unusual activity

- Detect anomalies in real-time

AI Workflow Security Checklist

Use this quick checklist to evaluate your system:

✅ Input Security

- Inputs validated and sanitized

- Sensitive data filtered

✅ Data Protection

- Data encrypted

- Sensitive info masked

✅ Access Control

- RBAC implemented

- API keys secured

✅ Monitoring

- Outputs filtered

- Logs minimized

✅ Testing

- Regular stress tests

- Prompt injection simulations

Real-World Example of AI Data Leakage

Consider a chatbot integrated with internal databases. Without proper safeguards:

- A user could ask cleverly crafted questions

- The AI might reveal internal data

- Logs may store sensitive interactions

This scenario highlights why proactive testing and auditing are essential.

FAQs: AI Workflow Security Best Practices

What are AI workflow security best practices?

AI workflow security best practices include input validation, data encryption, access control, output monitoring, and regular security audits. These measures help prevent unauthorized access and reduce the risk of sensitive data leaks in AI-powered systems.

How do AI workflows leak sensitive data?

AI workflows can leak data through prompt injection attacks, insecure APIs, improper logging, or by unintentionally including sensitive information in outputs. Weak access controls and third-party integrations also increase the risk of data exposure.

What is a prompt injection attack in AI?

A prompt injection attack occurs when a user manipulates input prompts to override system instructions and extract hidden or sensitive information. It is one of the most common vulnerabilities in AI systems using language models.

How can I prevent data leaks in AI systems?

To prevent data leaks, validate inputs, encrypt data, restrict access, avoid storing sensitive prompts, and monitor outputs. Regular audits and stress-testing AI workflows also help identify and fix vulnerabilities before they become critical issues.

How do you audit an AI workflow for security risks?

Auditing involves mapping the workflow, identifying data entry points, tracing data flow, reviewing storage and logs, checking access controls, and evaluating third-party integrations. This process helps uncover hidden vulnerabilities in AI systems.

What tools can be used to test AI security?

AI security testing tools include automated vulnerability scanners, data loss prevention (DLP) systems, API monitoring tools, and AI red-teaming platforms. These tools help identify weaknesses and simulate potential attacks.

Are AI automation tools safe for handling sensitive data?

AI automation tools can be safe if properly configured with strong security controls such as encryption, access management, and monitoring. However, misconfigured systems can expose sensitive data, making regular audits essential.

Conclusion

AI workflows offer powerful automation capabilities, but they also introduce new security challenges. Without proper safeguards, these systems can become a major source of data leaks and vulnerabilities. If you implement strong AI workflow security best practices, conduct regular audits, and actively stress-testing your systems, you can significantly reduce risks and build more secure AI-driven automation.

The key is to stay proactive—because in AI security, prevention is always better than damage control.